Changelog | January, 2024

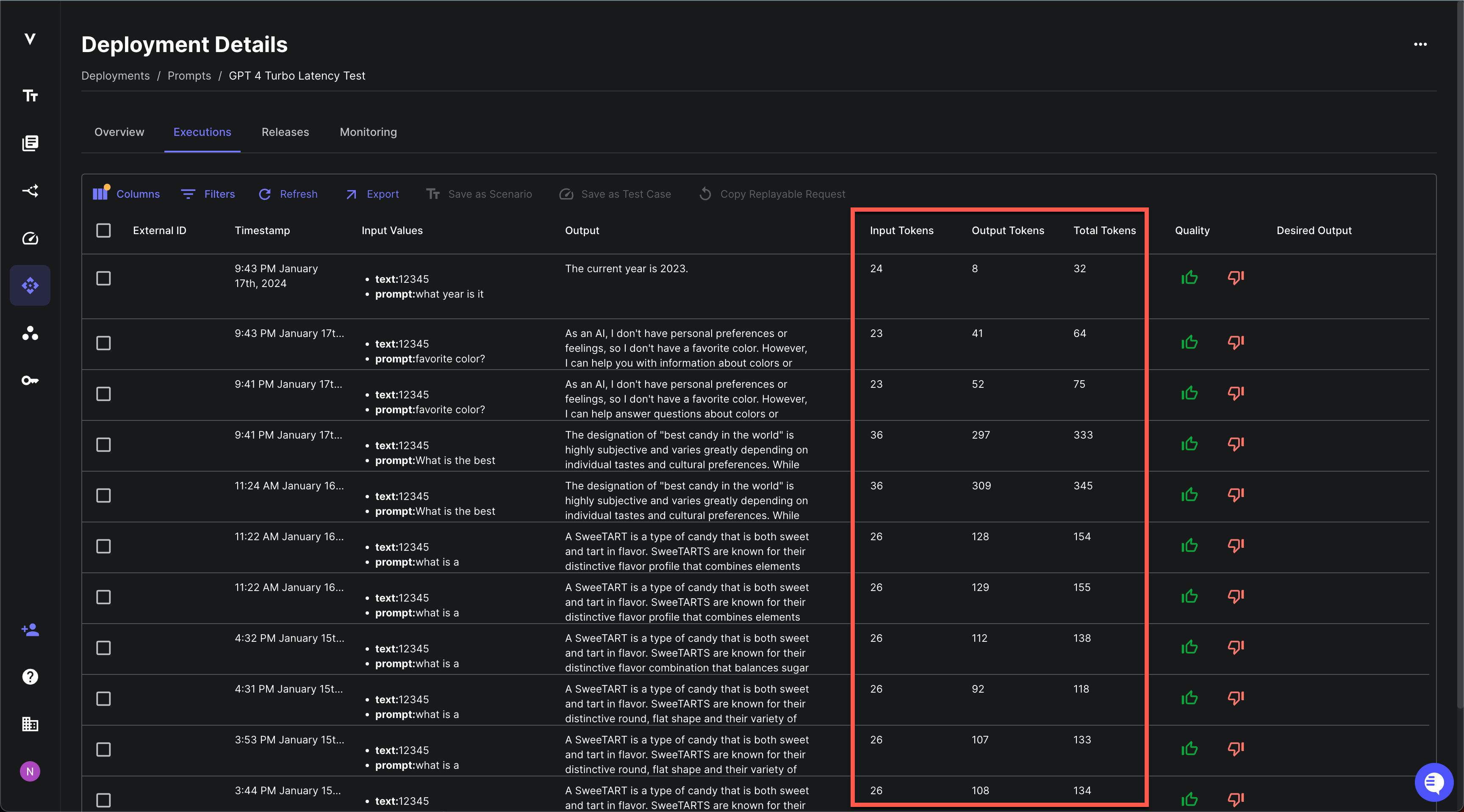

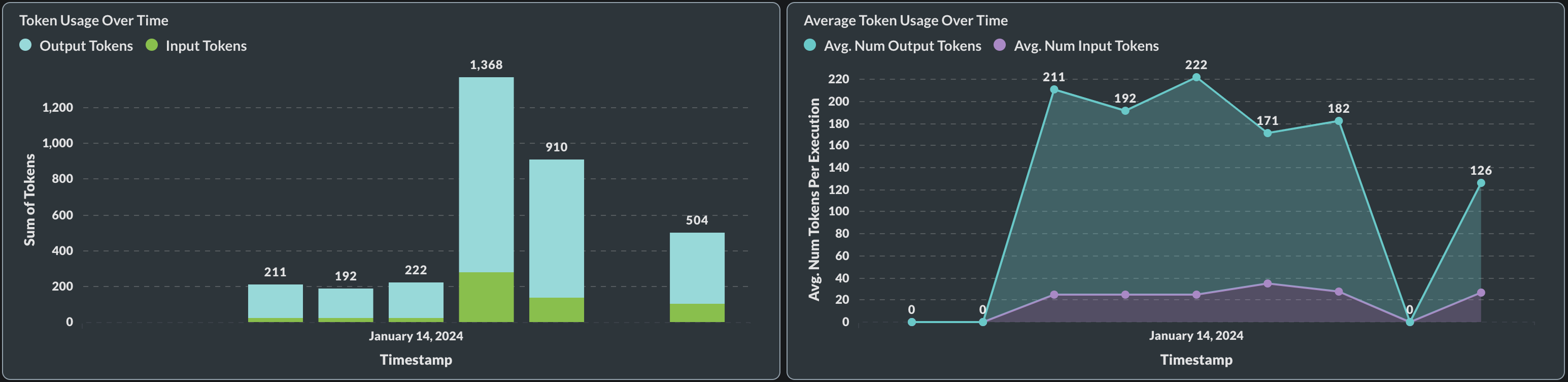

Prompt Deployment Usage Tracking

January 29th, 2024

Vellum now tracks the token usage of your Prompt Deployments. You can see the input, output, and total tokens used per request.

You can also view this data in aggregate in the Monitoring tab.
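As a rough illustration of how per-request counts roll up into an aggregate view, here is a minimal sketch. The `TokenUsage` class, its field names, and the assumption that total tokens equal input plus output are ours for illustration and are not Vellum's actual data model.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class TokenUsage:
    """Token counts for a single request (hypothetical field names)."""

    input_tokens: int
    output_tokens: int

    @property
    def total_tokens(self) -> int:
        # Assumption: the total is the sum of input and output tokens.
        return self.input_tokens + self.output_tokens


def aggregate(usages: Iterable[TokenUsage]) -> TokenUsage:
    """Sum per-request usage into one aggregate record."""
    usages = list(usages)
    return TokenUsage(
        input_tokens=sum(u.input_tokens for u in usages),
        output_tokens=sum(u.output_tokens for u in usages),
    )


per_request = [TokenUsage(120, 45), TokenUsage(300, 80)]
overall = aggregate(per_request)
print(overall.input_tokens, overall.output_tokens, overall.total_tokens)
```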

This is a precursor to more advanced usage and billing features coming down the road. If there’s more you’d like to see here, please share your feedback with us!

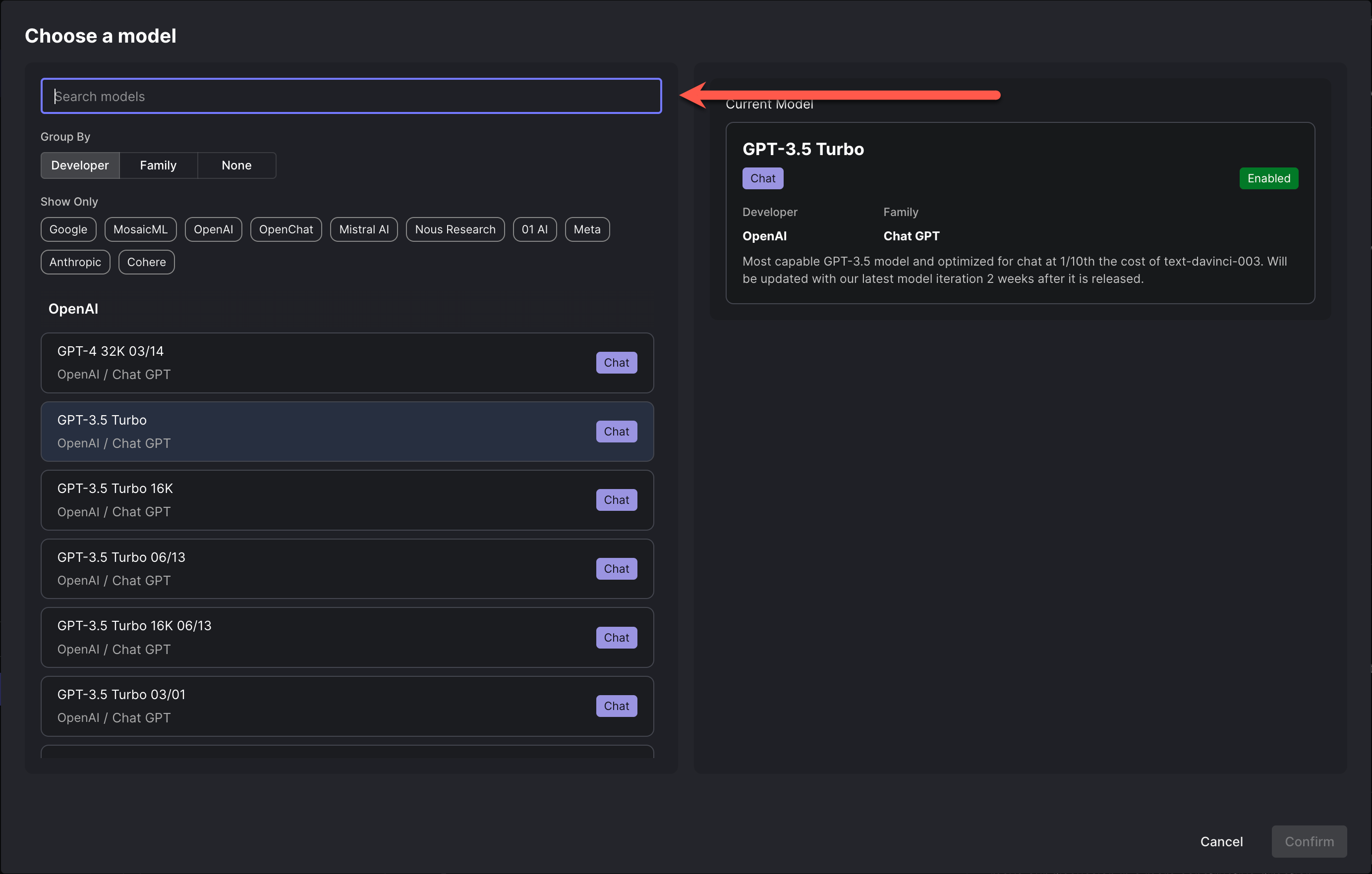

Model Search Bar

January 29th, 2024

As the number of models available in Vellum grows, it’s become harder to find the one you’re looking for. To help with this, we’ve added a search bar to the model selection dropdown in the Prompt and Workflow editors.

Single Editor Mode

January 25th, 2024

We’ve collaborated with our friends at velt.dev to deliver an all-new “Single Editor Mode” in Prompt and Workflow Sandboxes. With this, only one person can edit a Prompt/Workflow at a time, and you can hand off editing control to another collaborator. This avoids conflicts when multiple people try to edit the same Prompt/Workflow at once.

API to Execute Workflow w/o Streaming

January 23rd, 2024

We’ve added a new API endpoint for executing a Workflow Deployment without streaming back its incremental results. This is useful when you only care about a Workflow’s final result, or when you’re invoking your Workflow from a service, such as Zapier, that doesn’t support HTTP streaming.
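To illustrate the difference, the sketch below simulates a streaming execution with a plain Python generator and shows the extra client-side work of draining it to reach the final result. A non-streaming endpoint spares the caller that step. The event shape, state names, and function names here are hypothetical stand-ins, not Vellum's API.

```python
from typing import Any, Dict, Iterable, Iterator


def stream_workflow_events() -> Iterator[Dict[str, Any]]:
    """Stand-in for a streaming execution that yields incremental events."""
    yield {"state": "INITIATED", "output": None}
    yield {"state": "STREAMING", "output": "Hel"}
    yield {"state": "FULFILLED", "output": "Hello"}


def final_result(events: Iterable[Dict[str, Any]]) -> Any:
    """Drain the stream and keep only the last event's output."""
    last = None
    for event in events:
        last = event
    if last is None:
        raise ValueError("stream produced no events")
    return last["output"]


result = final_result(stream_workflow_events())
print(result)
```

A caller that cannot hold a streaming connection open has no way to run the loop in `final_result`, which is why a single request/response call is the better fit there.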

Workflow Deployment Execution Visualization Improvements

January 22nd, 2024

Now, when visiting the details page for a Workflow Deployment Execution, you’ll find an improved loading state as well as a simplified view for Conditional Nodes.

Upload/Download of Function Definitions

January 18th, 2024

You can now import your existing function definition files (JSON or YAML) into Vellum function calling blocks, and export functions you’ve already defined in Vellum to pass along to engineers for implementation.
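For a sense of what such a file might contain, here is a minimal sketch that writes a function definition to JSON and reads it back. It follows the JSON Schema style used by OpenAI function calling; the exact schema Vellum accepts is an assumption, and `get_current_weather`, `export_definition`, and `import_definition` are hypothetical names.

```python
import json
import os
import tempfile
from typing import Any, Dict

# A function definition in the OpenAI function-calling style.
weather_function: Dict[str, Any] = {
    "name": "get_current_weather",
    "description": "Get the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string", "description": "City name, e.g. Paris"},
            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
        },
        "required": ["city"],
    },
}


def export_definition(definition: Dict[str, Any], path: str) -> None:
    """Write a function definition to a JSON file."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(definition, f, indent=2)


def import_definition(path: str) -> Dict[str, Any]:
    """Read a function definition and check the keys we rely on."""
    with open(path, encoding="utf-8") as f:
        definition = json.load(f)
    missing = {"name", "parameters"} - definition.keys()
    if missing:
        raise ValueError(f"definition is missing keys: {sorted(missing)}")
    return definition


with tempfile.TemporaryDirectory() as tmp:
    file_path = os.path.join(tmp, "get_current_weather.json")
    export_definition(weather_function, file_path)
    loaded = import_definition(file_path)

print(loaded["name"])
```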

Image Support for OpenAI Vision Models

January 18th, 2024

Vellum now has API support for interacting with OpenAI’s vision models, such as gpt-4-vision-preview.

You can learn more about OpenAI Vision models here. Note that there is limited support for images in the Vellum UI at this time, but you can still use the API to interact with OpenAI Vision models.

UI support coming soon!

Here’s a quick example of how to send an image to the model using our Python SDK:
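As a minimal sketch of the underlying idea, and not the Vellum SDK call itself, the block below builds a chat message in the shape OpenAI's vision models accept: a user message whose content is a list mixing a text part and an image URL part. The helper name `build_vision_message` and the example URL are assumptions for illustration; how Vellum's SDK wraps this message is not shown here.

```python
from typing import Any, Dict


def build_vision_message(prompt: str, image_url: str) -> Dict[str, Any]:
    """Build a user message with one text part and one image part."""
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": image_url}},
        ],
    }


message = build_vision_message(
    "What is in this image?",
    "https://example.com/cat.png",  # placeholder URL
)
print(message["content"][1]["image_url"]["url"])
```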

Folders

January 12th, 2024

You can now organize entities in Vellum via folders! You can nest folders, share them by URL, and move entities between folders.

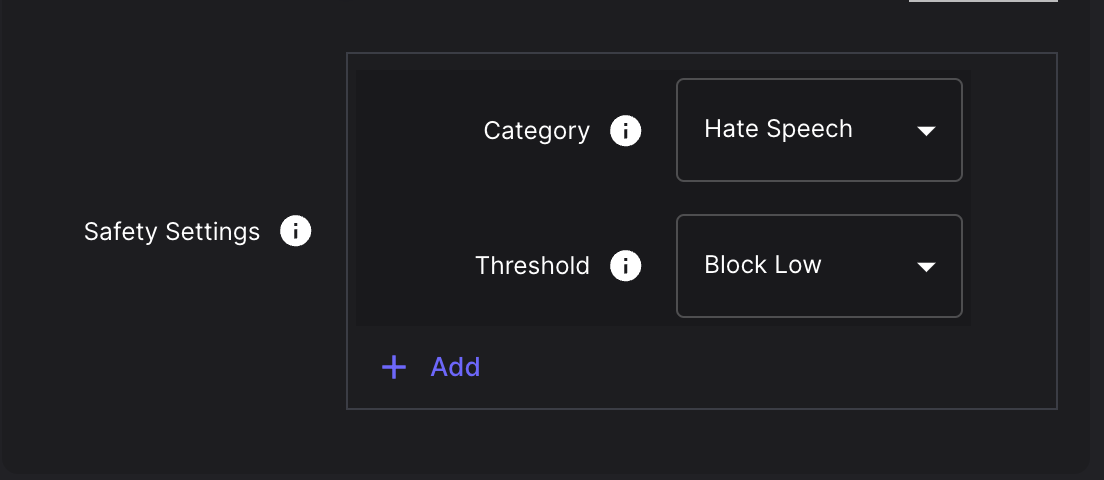

Support for Google Gemini Safety Settings

January 12th, 2024

There is now native support for setting the safetySetting parameters in Google Gemini prompts. You can learn more about how these parameters are used by Google in their docs here.
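For reference, a safety setting in Google's Gemini API pairs a harm category with a blocking threshold. The sketch below builds such a list as plain dictionaries. The category and threshold names follow Google's API and the sets are not exhaustive; the helper `safety_setting` is hypothetical, and how Vellum exposes these values in a prompt is not shown here.

```python
from typing import Dict, List

# Non-exhaustive sets of values accepted by Google's Gemini API.
HARM_CATEGORIES = {
    "HARM_CATEGORY_HARASSMENT",
    "HARM_CATEGORY_HATE_SPEECH",
    "HARM_CATEGORY_SEXUALLY_EXPLICIT",
    "HARM_CATEGORY_DANGEROUS_CONTENT",
}
BLOCK_THRESHOLDS = {
    "BLOCK_NONE",
    "BLOCK_ONLY_HIGH",
    "BLOCK_MEDIUM_AND_ABOVE",
    "BLOCK_LOW_AND_ABOVE",
}


def safety_setting(category: str, threshold: str) -> Dict[str, str]:
    """Validate and build one category/threshold pair."""
    if category not in HARM_CATEGORIES:
        raise ValueError(f"unknown category: {category}")
    if threshold not in BLOCK_THRESHOLDS:
        raise ValueError(f"unknown threshold: {threshold}")
    return {"category": category, "threshold": threshold}


safety_settings: List[Dict[str, str]] = [
    safety_setting("HARM_CATEGORY_HARASSMENT", "BLOCK_MEDIUM_AND_ABOVE"),
    safety_setting("HARM_CATEGORY_DANGEROUS_CONTENT", "BLOCK_ONLY_HIGH"),
]
print(safety_settings)
```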

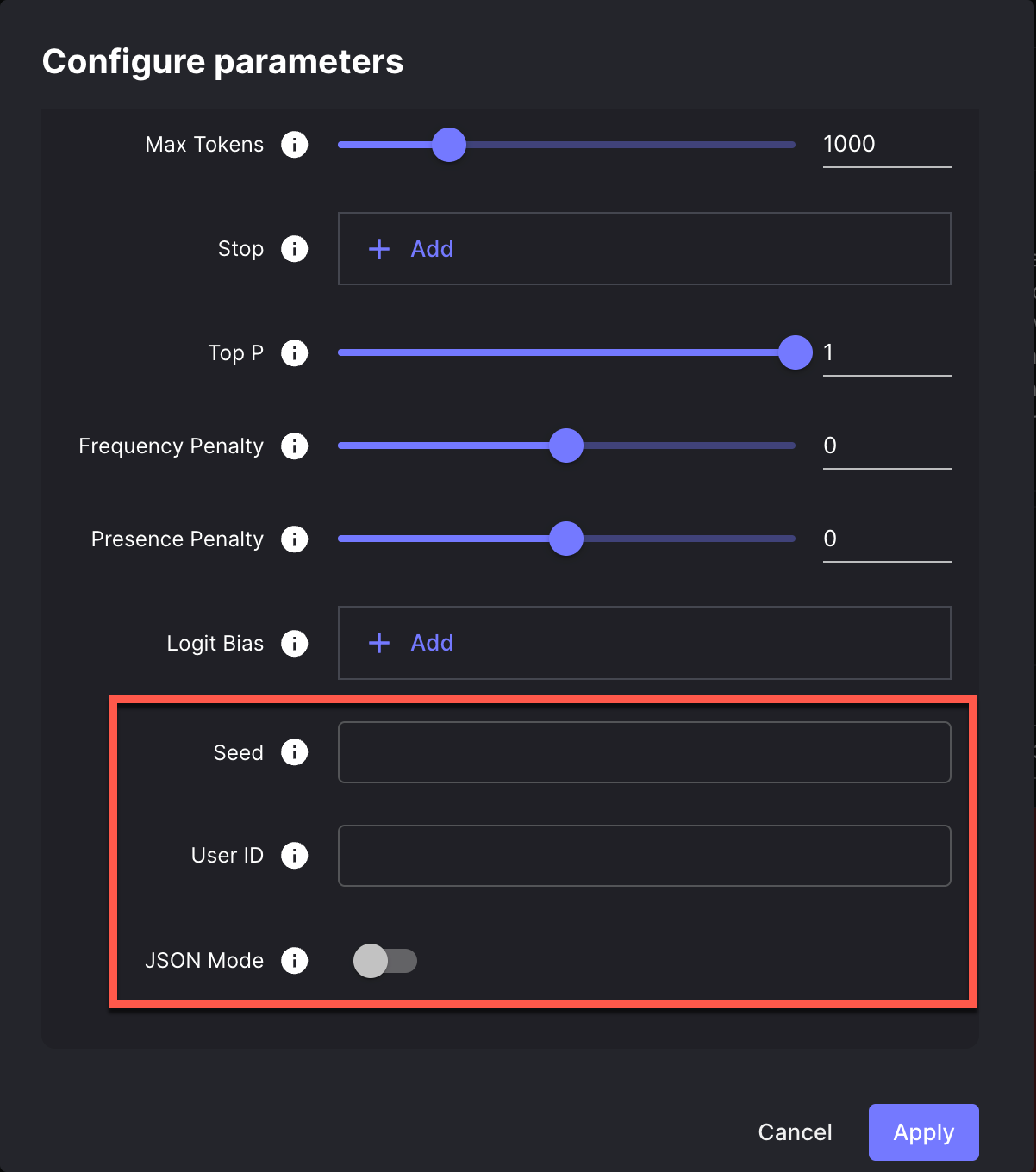

Support for OpenAI JSON Mode, User ID, and Seed Params

January 11th, 2024

There is now native support for setting the user and seed parameters in OpenAI API requests, as well as specifying that the response format be of type JSON. You can learn more about how these parameters are used by OpenAI in their docs here.
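To show where these parameters sit in a request, here is a minimal sketch of an OpenAI Chat Completions request body built as a plain dictionary. The `user`, `seed`, and `response_format` fields are OpenAI's; the helper `build_request`, the model name, and the example values are assumptions for illustration, and Vellum sends the equivalent request on your behalf.

```python
from typing import Any, Dict


def build_request(prompt: str, user_id: str, seed: int) -> Dict[str, Any]:
    """Build a Chat Completions body with JSON mode, seed, and user set."""
    return {
        "model": "gpt-4-1106-preview",  # example model; any JSON-mode model works
        "messages": [
            # OpenAI's JSON mode expects the word "JSON" to appear in the messages.
            {"role": "system", "content": "Reply with a JSON object."},
            {"role": "user", "content": prompt},
        ],
        "response_format": {"type": "json_object"},  # JSON mode
        "seed": seed,  # best-effort reproducible sampling
        "user": user_id,  # end-user identifier for abuse monitoring
    }


body = build_request("List three primary colors.", user_id="user-123", seed=42)
print(body["response_format"], body["seed"], body["user"])
```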

Cloning Workflow Scenarios

January 9th, 2024

You can now clone a Workflow Scenario. This is useful when you want a new Scenario that is similar to an existing one, but with some changes.

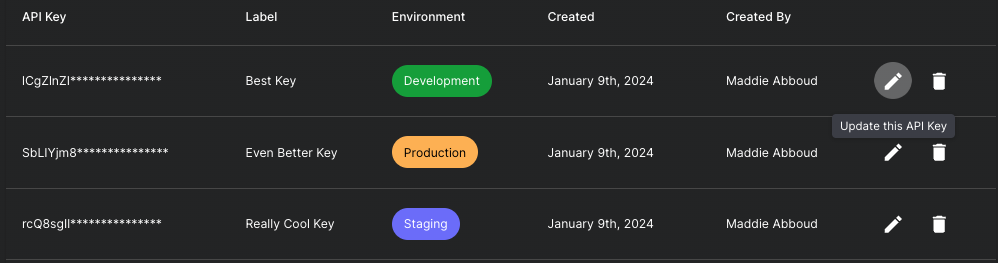

API Key Metadata

January 9th, 2024

Now you can add and view metadata for your Vellum API keys. For example, you can see when an API key was created and by whom. You can also assign a label to an API key to help you keep track of its purpose and an environment tag so that you know where it’s used.

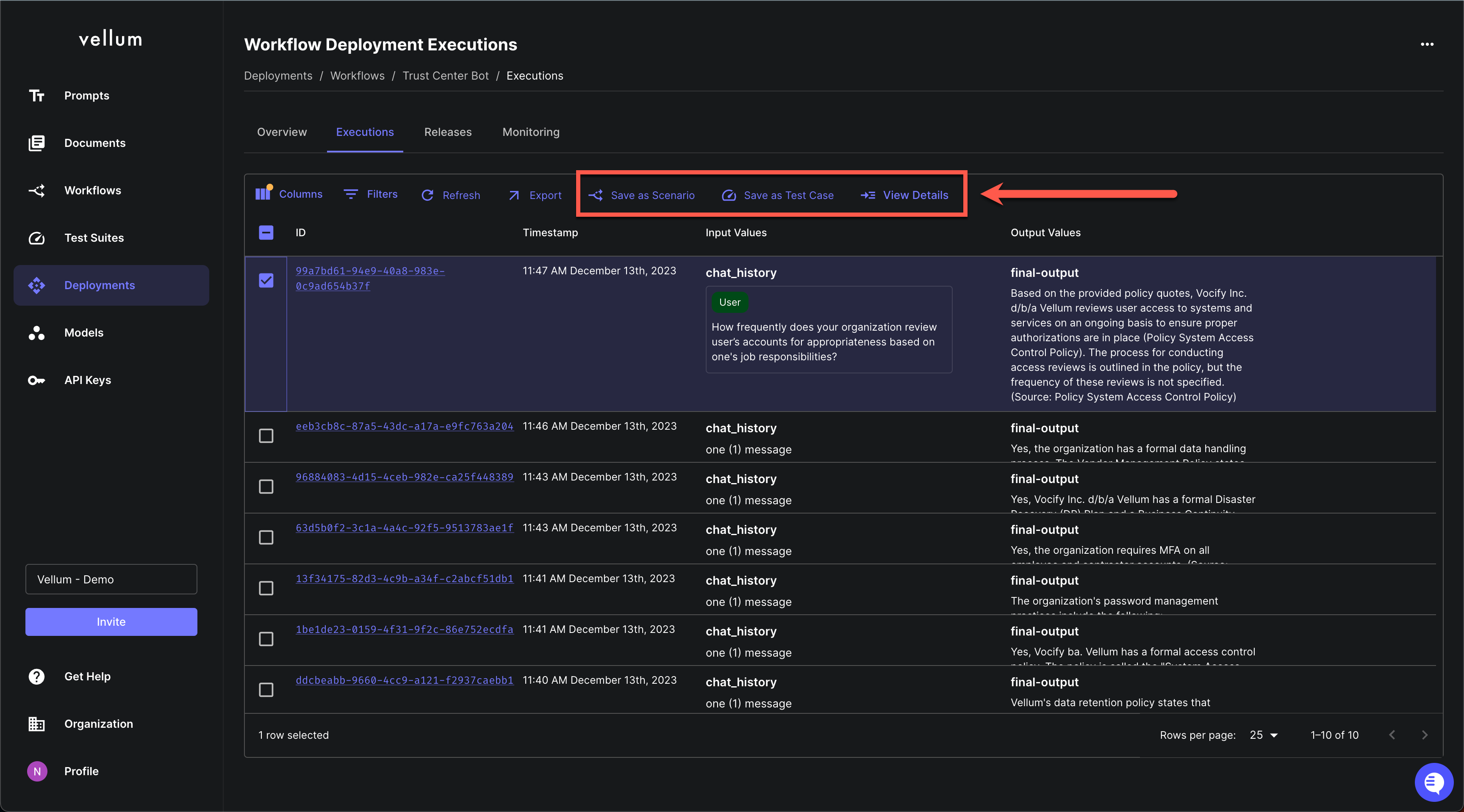

Top-Level Workflow Execution Actions

January 4th, 2024

You can now find the following actions at the top level of the Workflow and Prompt Deployment Execution pages:

- Save as Scenario: Useful for saving an edge case seen in production as a Scenario for qualitative eval.

- Save as Test Case: Useful for saving an edge case seen in production to your bank of Test Cases for quantitative eval.

- View Details: Drill in to see the specifics of that Execution.

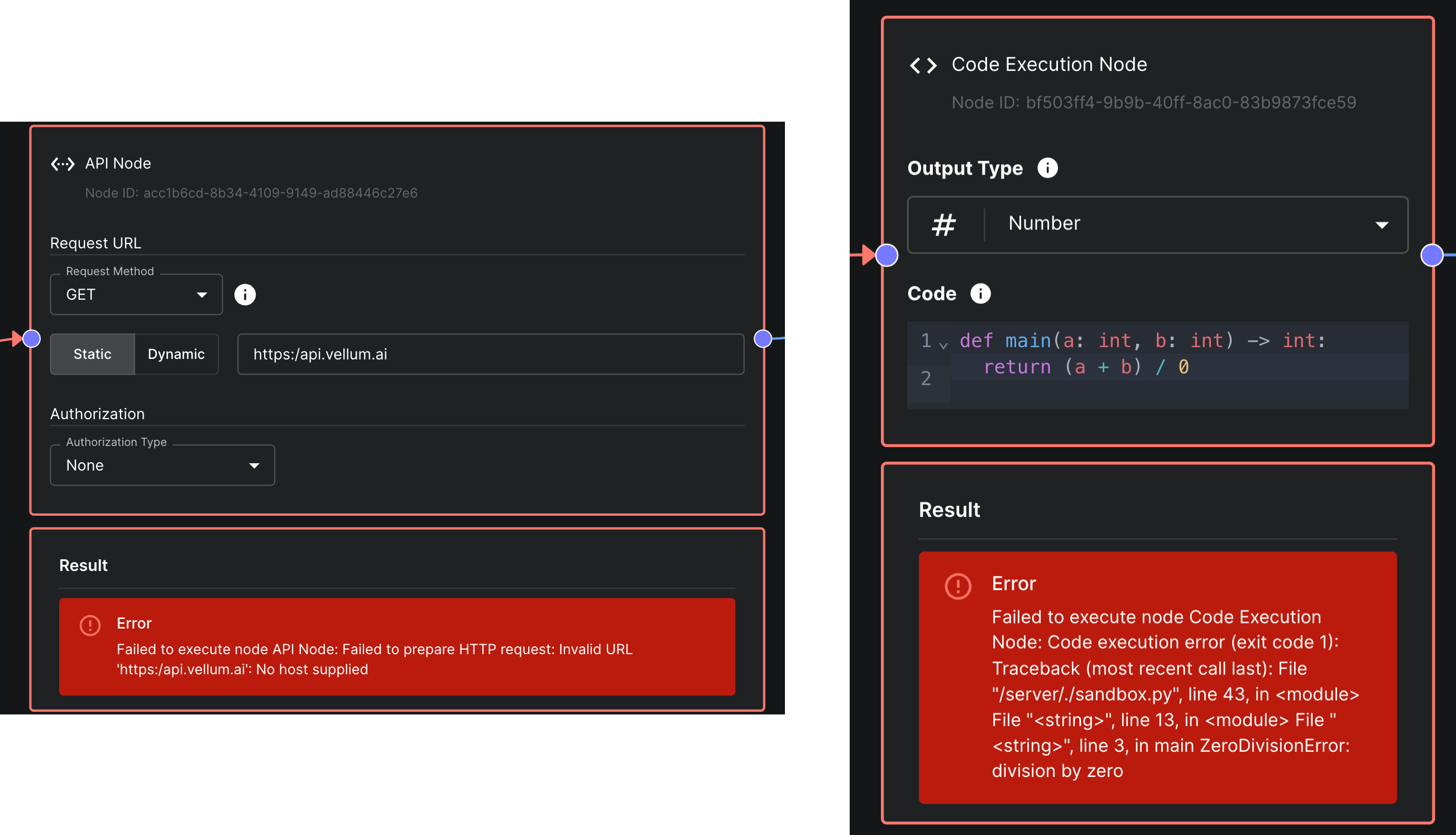

Improved Error Messages in Code & API Nodes

January 2nd, 2024

API Nodes and Code Nodes within Workflows now have improved error messages. When an error occurs, the message includes the line number and column number where it occurred, which makes it easier to debug your Workflows.
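To give a feel for what a line-and-column error message looks like, here is a minimal sketch using Python's built-in `compile` and the `lineno` and `offset` attributes of `SyntaxError`. The function name, the `<code-node>` label, and the message format are hypothetical; this is not how Vellum produces its messages.

```python
def describe_syntax_error(source: str, filename: str = "<code-node>") -> str:
    """Return "ok", or a message that pinpoints the first syntax error."""
    try:
        compile(source, filename, "exec")
    except SyntaxError as exc:
        # lineno is 1-based; the exact offset can vary between Python versions.
        return f"{filename}: line {exc.lineno}, column {exc.offset}: {exc.msg}"
    return "ok"


broken = "a = 1\nb = 2 +\n"  # the second line ends mid-expression
error_message = describe_syntax_error(broken)
print(error_message)
```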