Changelog | April, 2024

April 30th, 2024

Gemini 1.5 Pro is now available in Vellum.

You can add it to your workspace through the models page.

April 30th, 2024

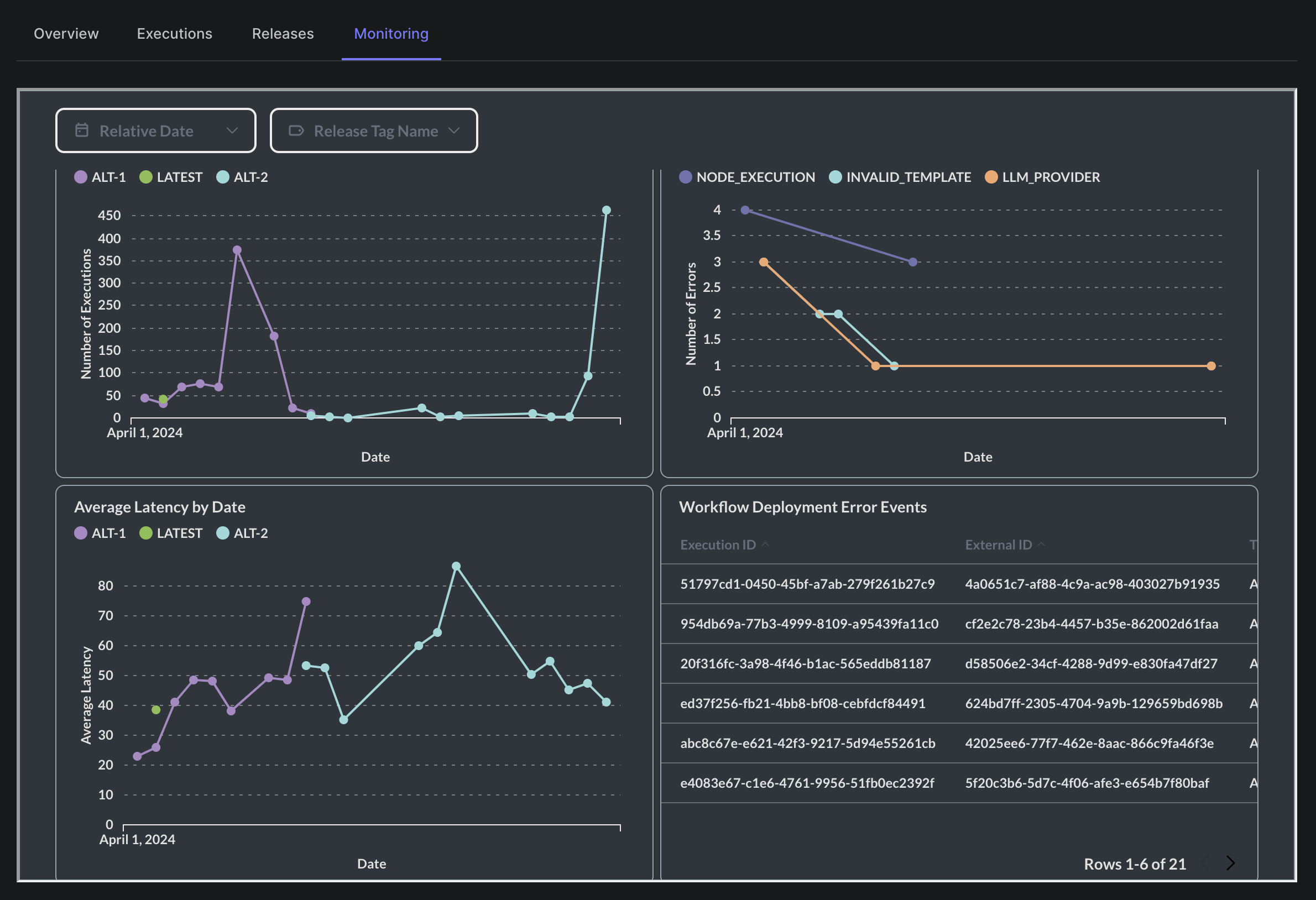

We’ve added new functionality to the monitoring tab on workflow deployments. It’s now possible to see a breakdown of executions by the release tag used, and further filter down based on a specific release tag.

April 30th, 2024

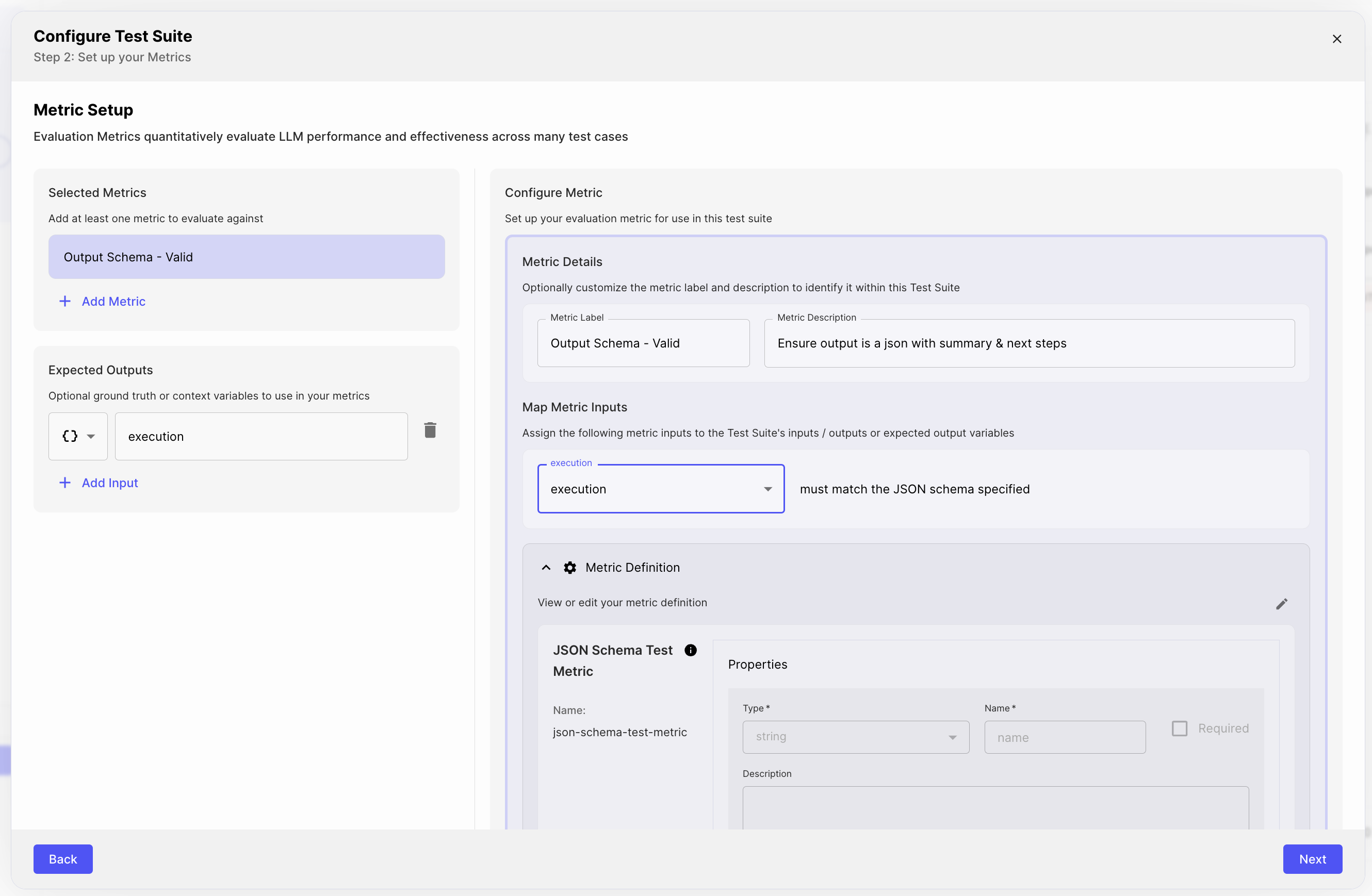

Introducing Reusable Metrics!

Metrics can now be shared across your Test Suites making it easier for you to consistently test and evaluate your Prompt / Workflow quality. Define a suite of Custom Metrics tailored to your business logic and use-case to save time and ensure standardized evaluation criteria.

April 30th, 2024

Prompts can now be broken down into multiple sections and organized using “blocks.” Prompt blocks can be reordered and toggled on or off.

Splitting your Prompt into multiple blocks can make it easier to navigate complex Prompts and help you focus on iterating on specific sections. Check out the demo below to see how it works!

April 29th, 2024

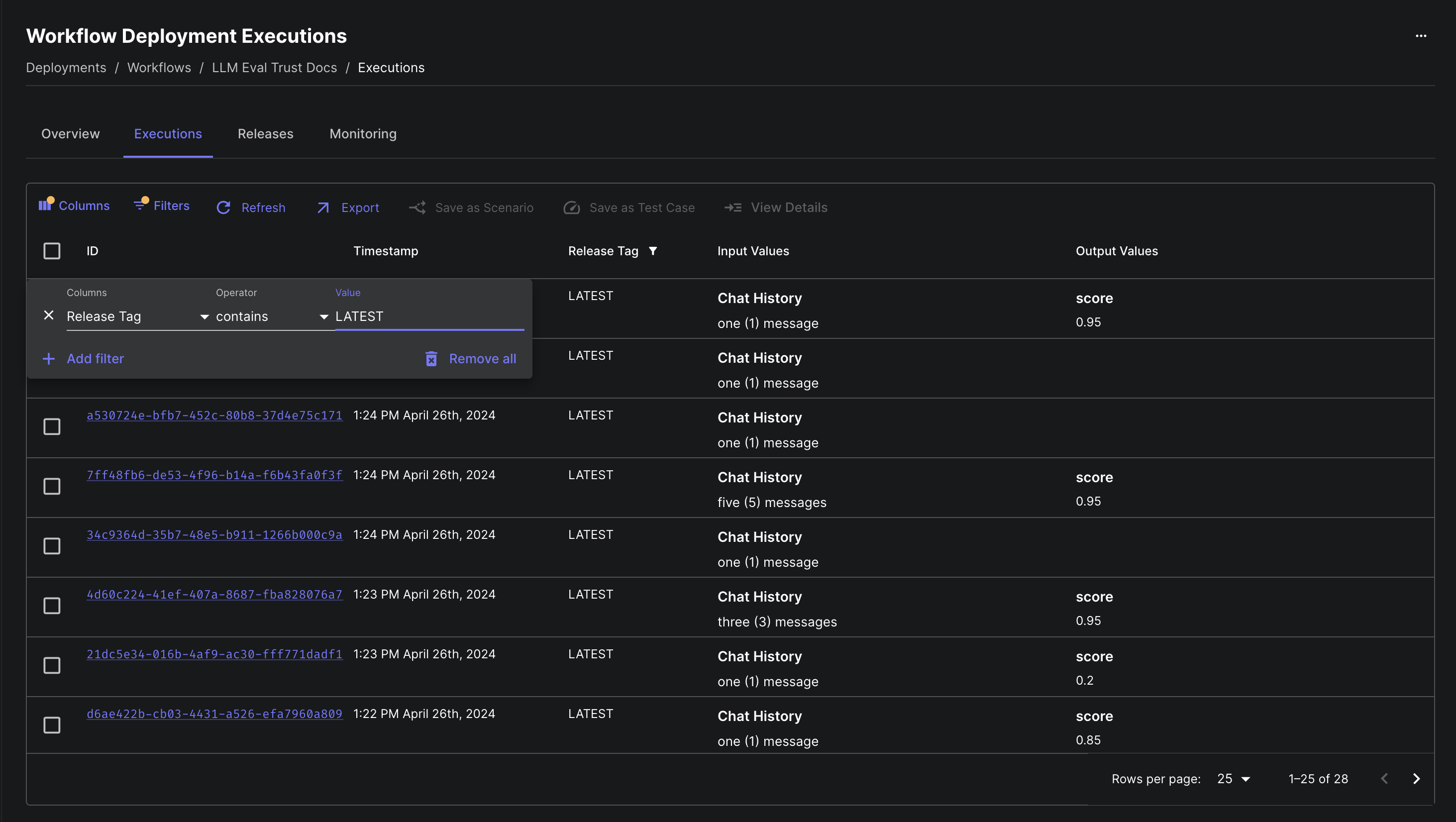

It’s now possible to filter workflow deployment executions by the release tag used when executing the workflow.

This can be very useful for monitoring differences between releases of a deployment. Are you still using an older release in production? Are executions of your new release behaving as expected?

April 26th, 2024

The executions tab of the workflow deployments page now fetches historical executions much faster. This tab is a great way to see how your customers are actually using your deployments.

In our tests on deployments with over 200k executions, data now loads in under 4 seconds instead of the previous 15+ seconds, roughly a 4x speed improvement.

April 25th, 2024

Vellum’s Evaluation framework can now be used to test arbitrary functions defined in your codebase – not just Prompts and Workflows managed by Vellum.

For example, you might test a prompt chain that lives in your codebase and that’s defined using another third party library. This can be particularly useful if you want to incrementally migrate to Vellum Prompts/Workflows, but ensure that the outputs remain consistent.

For a detailed example of how to use Vellum’s evaluation framework to test external functions, see the Python example here.

April 24th, 2024

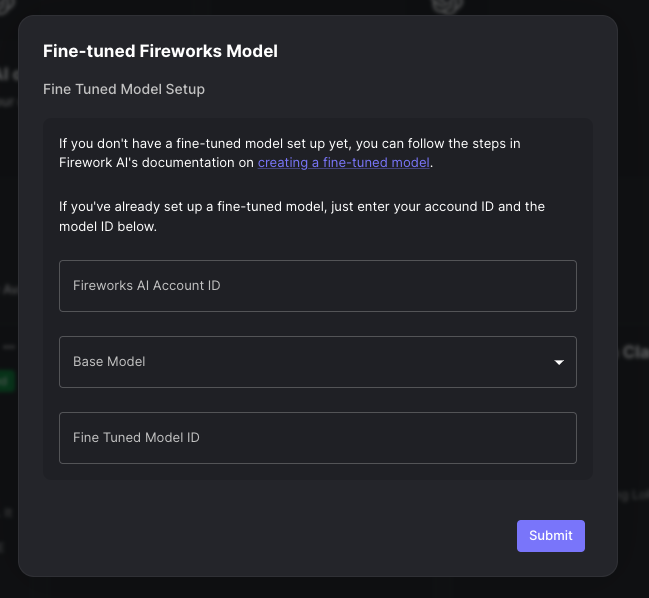

Vellum now supports models that you’ve fine-tuned on Fireworks AI. You can add your fine-tuned Fireworks model by navigating to the Models page and clicking on the featured model template at the top.

Note that only the Mistral family of models is currently supported. If there are other base models that you would like to see supported, please reach out to us!

April 23rd, 2024

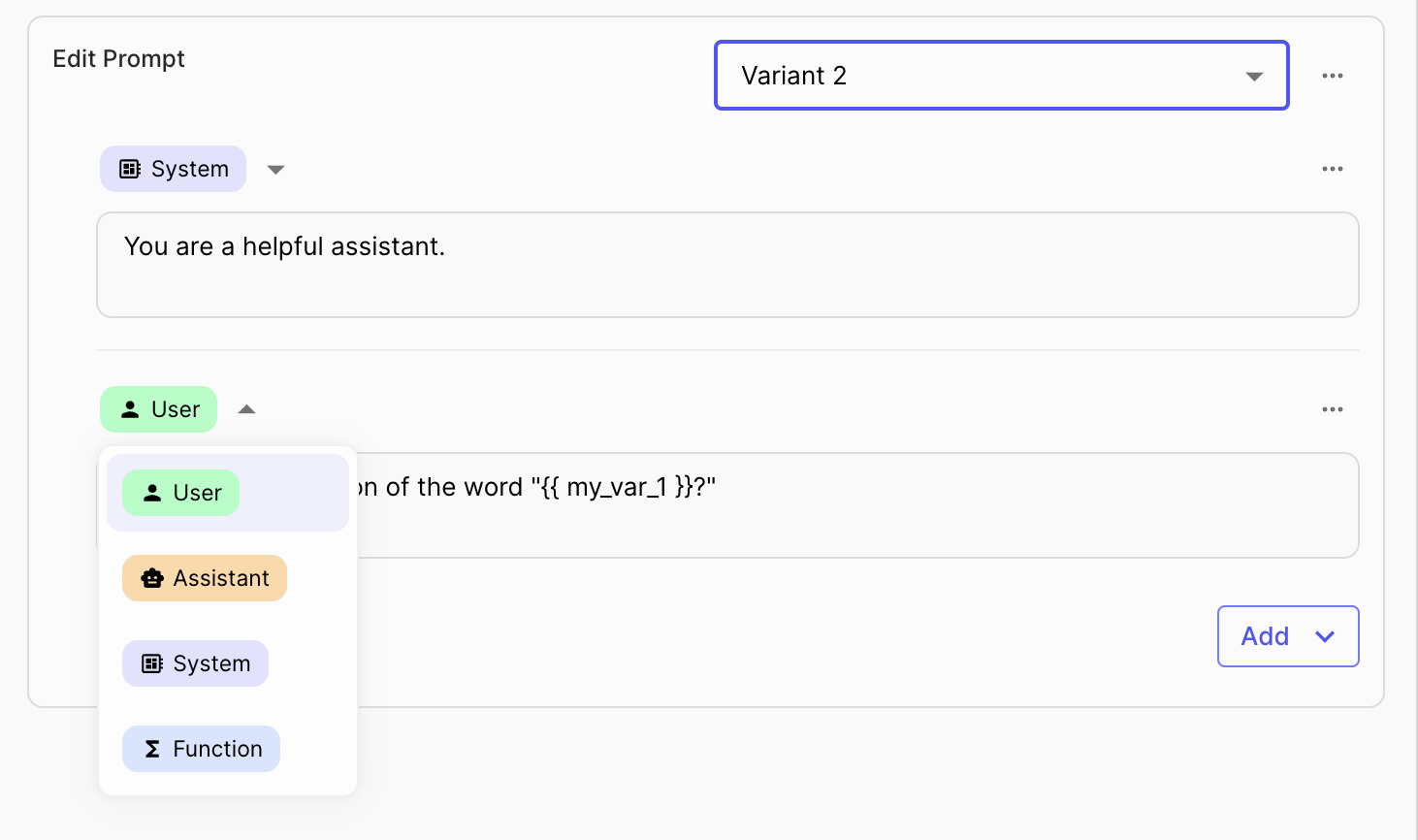

We’ve updated the prompt editing UI throughout Vellum. You’ll see the new look in the Prompt Editor, Comparison Mode, Chat Mode, Prompt Nodes in Workflows, and Deployment Overviews. This is the first in a series of exciting improvements to the prompt editing experience that will be rolling out over the coming weeks and months.

April 23rd, 2024

The API for upserting a Prompt Sandbox Scenario now requests and responds with schemas that are more consistent with other Vellum APIs, using discriminated unions for improved type safety. This API is available on version 0.4.0 of our SDKs.

You can find the API documentation for it here.
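For readers unfamiliar with the term, here is a minimal sketch of what a discriminated union looks like in Python. The class names, field names, and `type` values below are hypothetical illustrations, not Vellum's actual schema; see the API documentation for the real shapes.

```python
from dataclasses import dataclass
from typing import Literal, Union


@dataclass
class StringInput:
    # Hypothetical variant; the literal "type" field is the discriminator.
    key: str
    value: str
    type: Literal["STRING"] = "STRING"


@dataclass
class ChatHistoryInput:
    # Hypothetical variant carrying a list of chat messages.
    key: str
    value: list
    type: Literal["CHAT_HISTORY"] = "CHAT_HISTORY"


# The union: a value is exactly one of the variants, identified by "type".
ScenarioInput = Union[StringInput, ChatHistoryInput]


def parse_input(raw: dict) -> ScenarioInput:
    """Select the variant by inspecting the discriminator field."""
    kind = raw.get("type")
    if kind == "STRING":
        return StringInput(key=raw["key"], value=raw["value"])
    if kind == "CHAT_HISTORY":
        return ChatHistoryInput(key=raw["key"], value=raw["value"])
    raise ValueError(f"Unknown input type: {kind!r}")
```

Because each variant carries a fixed `type` value, a type checker can narrow the union after a check on that field, which is where the type-safety benefit comes from.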

April 23rd, 2024

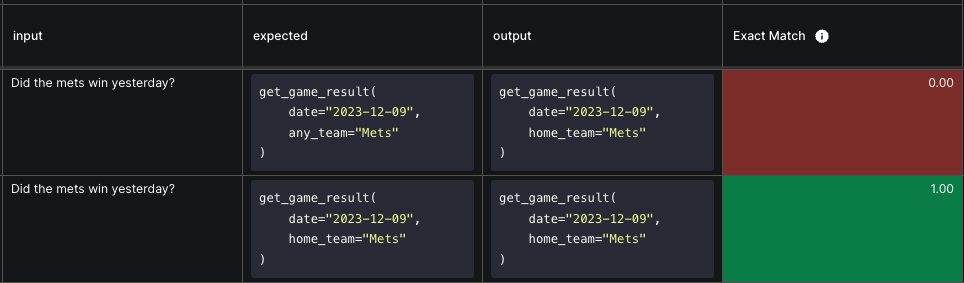

Workflows support Function Call values as a valid output type. Because these function calls often come from models, it is valuable to have evaluations on these workflows that ensure the function call output is what you expect. Test Suites in Vellum now support Function Call values as test case inputs and evaluation values.

April 19th, 2024

The following models are now available in Vellum:

They can be added to your workspace through the models page.

April 18th, 2024

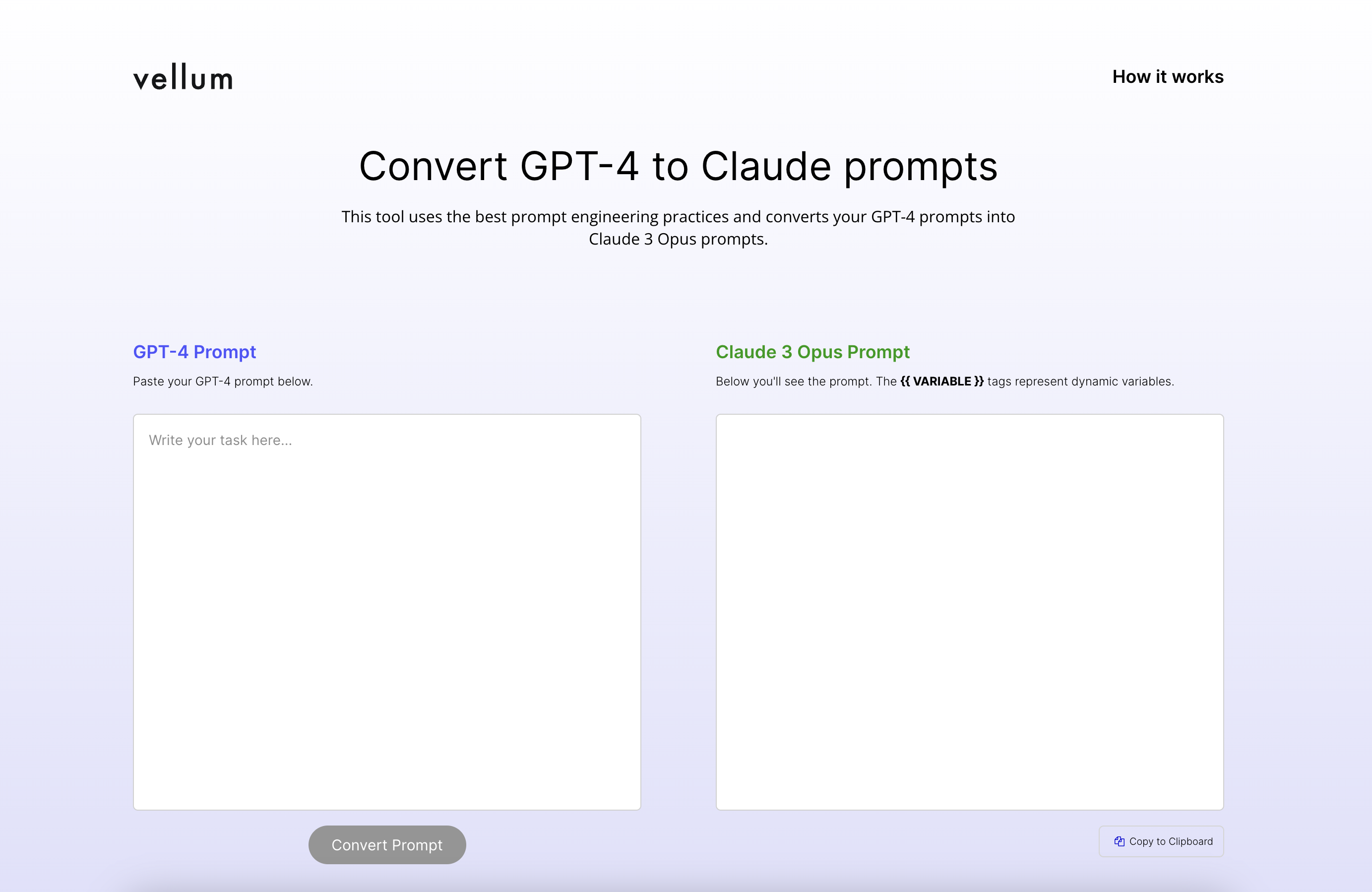

If you’ve been using GPT models, you’ve likely relied on prompt engineering tips that worked well for those models. But when you apply the same prompts to Claude 3 Opus, you might notice they don’t perform as expected.

This happens because Claude 3 Opus is trained using different methods and data, so the way you prompt it differs from how you would prompt GPT-4. We have some helpful tips in our guide, but as of today, you can convert your prompts even faster…

We’ve released a free tool that allows you to paste your GPT-4 prompt and get an adapted Claude 3 Opus prompt with suggestions for dynamic variables. You can try the tool here.

If you don’t have a working GPT-4 prompt but need to create a prompt for Claude 3 Opus from scratch, you can use our second new free tool – “Claude Prompt Generator.”

This generator lets you input your 'prompt objective' and creates a suitable prompt for Claude 3 Opus, with suggestions for dynamic variables that you should include. You can try the tool here.

April 10th, 2024

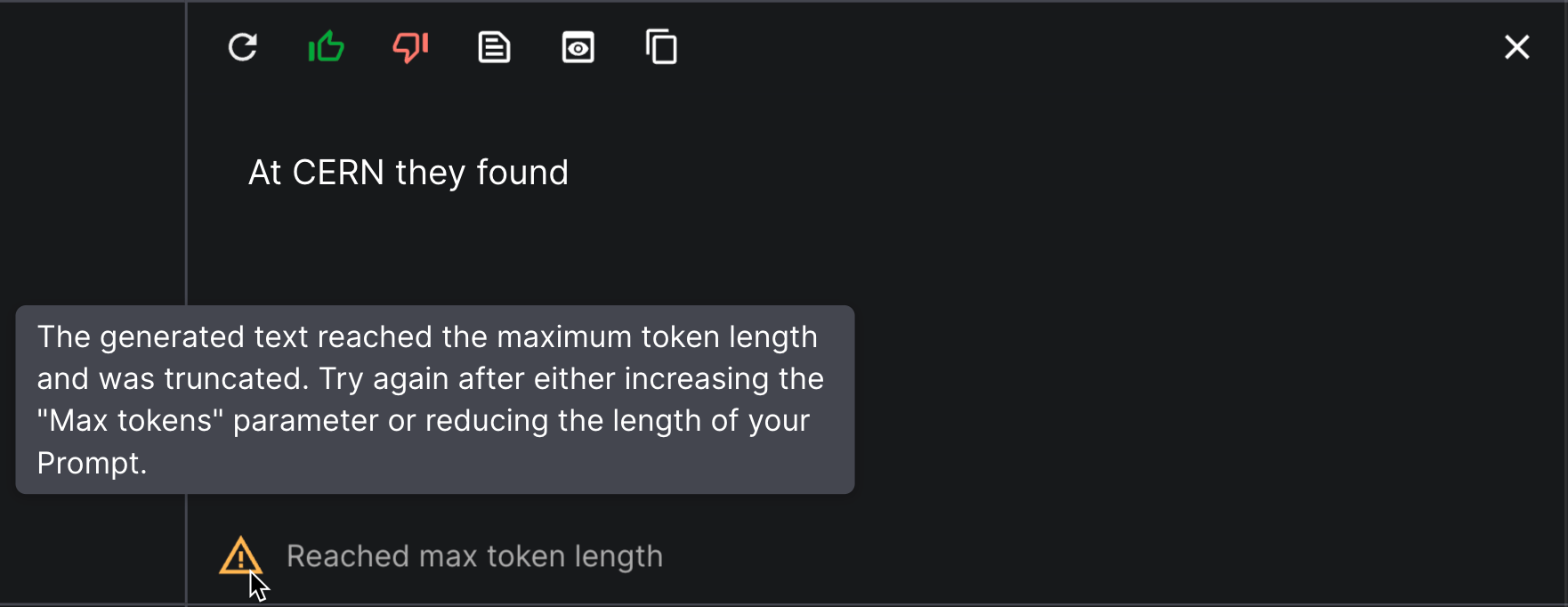

When iterating on a Prompt in Vellum’s Prompt Sandbox, you may find that its output stops mid-sentence. This is often because the “Max Tokens” parameter is set too low, or the prompt itself is too long. To help you identify when this is the case, we’ve added a warning that will appear when this max is hit.

April 9th, 2024

OpenAI’s newest GPT-4 Turbo model gpt-4-turbo-2024-04-09 is now available in Vellum!

April 9th, 2024

We have added the ability for you to track model host usage from the execute-prompt API. This API update is available on version 0.3.21 of our SDKs.

You can also now view model host usage in the Prompt Sandbox by enabling the “Track Usage” toggle in your Prompt Sandbox’s settings.

April 8th, 2024

We have a new API available in beta for listing the Test Cases belonging to a Test Suite at GET /v1/test-suites/{id}/test-cases.

This API is available on version 0.3.20 of our SDKs.
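If you want to call the endpoint directly rather than through an SDK, the request could be built roughly as follows. This is a sketch under assumptions: the base URL `https://api.vellum.ai` and the `X_API_KEY` header name are not stated in this changelog, so confirm both against the API documentation before relying on them.

```python
import urllib.request
from urllib.parse import quote

# Assumption: the public API base URL; not stated in this changelog.
BASE_URL = "https://api.vellum.ai"


def build_list_test_cases_request(test_suite_id: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a GET request for a Test Suite's Test Cases."""
    # Percent-encode the ID so it is safe to embed in the URL path.
    path = f"/v1/test-suites/{quote(test_suite_id, safe='')}/test-cases"
    return urllib.request.Request(
        BASE_URL + path,
        headers={"X_API_KEY": api_key},  # Assumption: auth header name.
        method="GET",
    )


# To send the request, pass it to urllib.request.urlopen(...) and parse
# the JSON body of the response.
```

Since the endpoint is in beta, its response shape may change, so it is worth handling unexpected fields defensively.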

April 5th, 2024

Prompt Sandboxes have an entirely new view mode: Prompt Editor. It’s a dedicated space for iterating on a single Variant and Scenario. All of the features you need to work quickly are easily accessible, and collapsible sections make it simple to free up screen space. More improvements are coming down the pike for Prompt Editor, and many of them will make their way into Comparison and Chat Modes as well.

April 5th, 2024

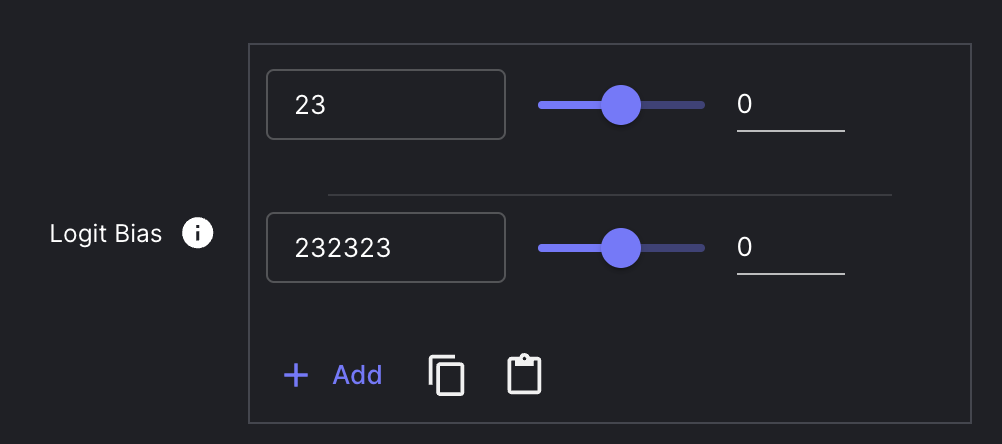

You can now copy logit bias parameters from one Prompt Variant and paste them into another Prompt. This works in both Prompt Sandboxes and Prompt Nodes within Workflows.

April 4th, 2024

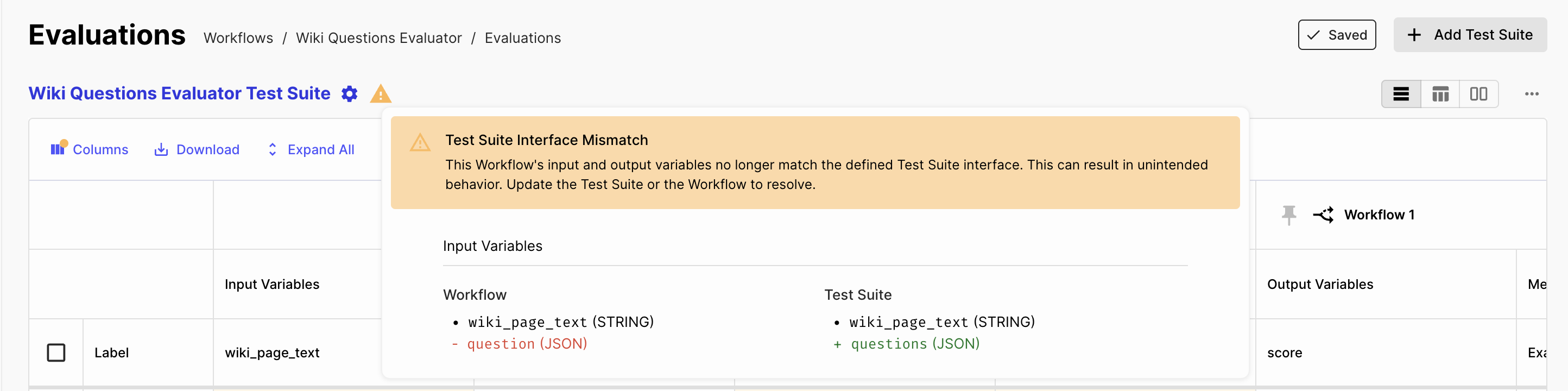

We’ve made some changes to our Test Suite UX. Here’s what’s new:

April 3rd, 2024

We have two new APIs available in beta for accessing your Test Suite Runs:

GET /v1/test_suite_runs/{id}

GET /v1/test_suite_runs/{id}/executions

These APIs are available on version 0.3.15 of our SDKs.
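As a small illustration of how the two paths relate, the helper below formats both from a single Test Suite Run ID. The function name `suite_run_paths` is hypothetical and only the path templates come from this entry; the host and authentication are left out on purpose.

```python
from urllib.parse import quote


def suite_run_paths(run_id: str) -> dict:
    """Return the two beta endpoint paths for a given Test Suite Run ID."""
    # Percent-encode the ID so it is safe to embed in a URL path.
    safe_id = quote(run_id, safe="")
    return {
        "run": f"/v1/test_suite_runs/{safe_id}",
        "executions": f"/v1/test_suite_runs/{safe_id}/executions",
    }
```

The first path addresses the run itself, and the second addresses the executions that belong to that run.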