Changelog | June, 2024

June 27th, 2024

When designing a Workflow, you often need to iterate over an array and run the same operation on each item. Previously, the only way to do this was to build the loop by hand, connecting Nodes in a tedious layout.

To make this process easier, we are now introducing Map Nodes! Map Nodes work the same way that array map functions do in many common programming languages. The Node takes a JSON array as an input and iterates over it, running a Subworkflow for each item. Each Subworkflow run receives three input variables: the current item, its index, and the full array. The outputs of all Subworkflow runs are then combined into a single array as the Node's output. Map Nodes also support up to 12 concurrent iterations.
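
For readers who think in code, the behavior described above can be approximated in plain Python. This is only an analogy, not Vellum's implementation: `map_node` and `describe` are hypothetical names, a thread pool stands in for concurrent iterations, and keeping outputs in input order is an assumption.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, List

MAX_CONCURRENCY = 12  # upper bound on concurrent iterations stated in this entry


def map_node(
    items: List[Any],
    subworkflow: Callable[[Any, int, List[Any]], Any],
    concurrency: int = MAX_CONCURRENCY,
) -> List[Any]:
    """Call subworkflow(item, index, items) for each item and collect the outputs.

    Hypothetical helper: outputs are returned in input order (an assumption).
    """
    workers = max(1, min(concurrency, MAX_CONCURRENCY))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [
            pool.submit(subworkflow, item, index, items)
            for index, item in enumerate(items)
        ]
        # Reading results in submission order keeps outputs aligned with inputs.
        return [future.result() for future in futures]


def describe(item: str, index: int, array: List[str]) -> str:
    """Stand-in for a Subworkflow: it sees the item, its index, and the array."""
    return f"{index + 1}/{len(array)}: {item.upper()}"


results = map_node(["a", "b", "c"], describe)
print(results)
```

The stand-in function takes the same three inputs the Subworkflow receives, which is the part of the analogy that matters most.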

June 26th, 2024

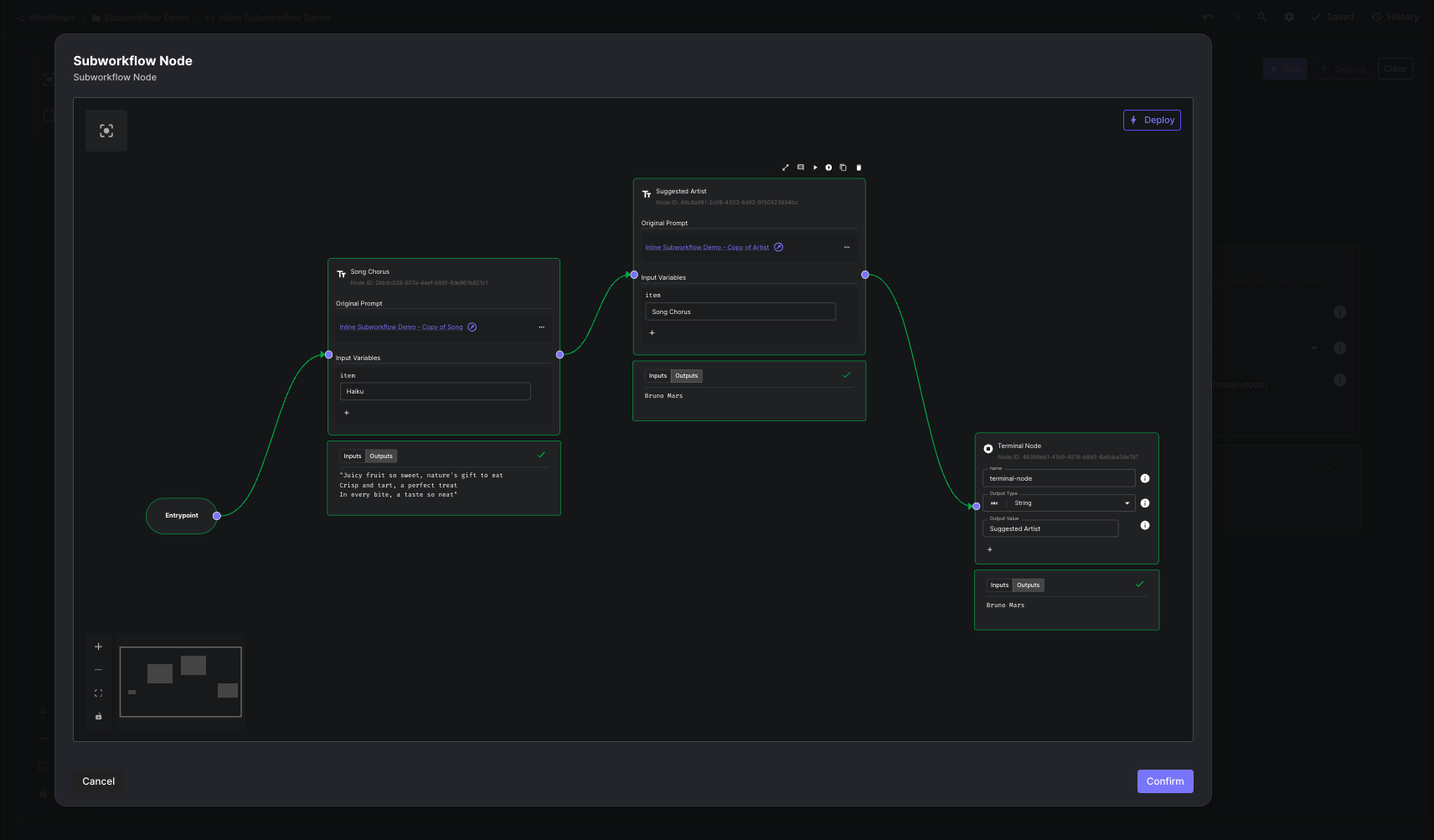

Subworkflow nodes are a powerful part of Vellum Workflows that let users create reusable units of node logic. Until now, however, using one meant developing the Workflow in a separate Sandbox and deploying it before you could reference it from another Workflow.

Today, we are releasing Inline Subworkflows! They empower users to create and group together modular units of nodes directly within the context of an existing Workflow. The node opens its own editor, which offers much the same experience as the parent Workflow's editor, including all existing nodes and copy/paste.

For more details, check out our new help center doc.

June 20th, 2024

We now support the new Claude 3.5 Sonnet model. It has already been automatically added to all workspaces.

We also support the model hosted through AWS Bedrock. You can add it to your workspace from the models page.

June 13th, 2024

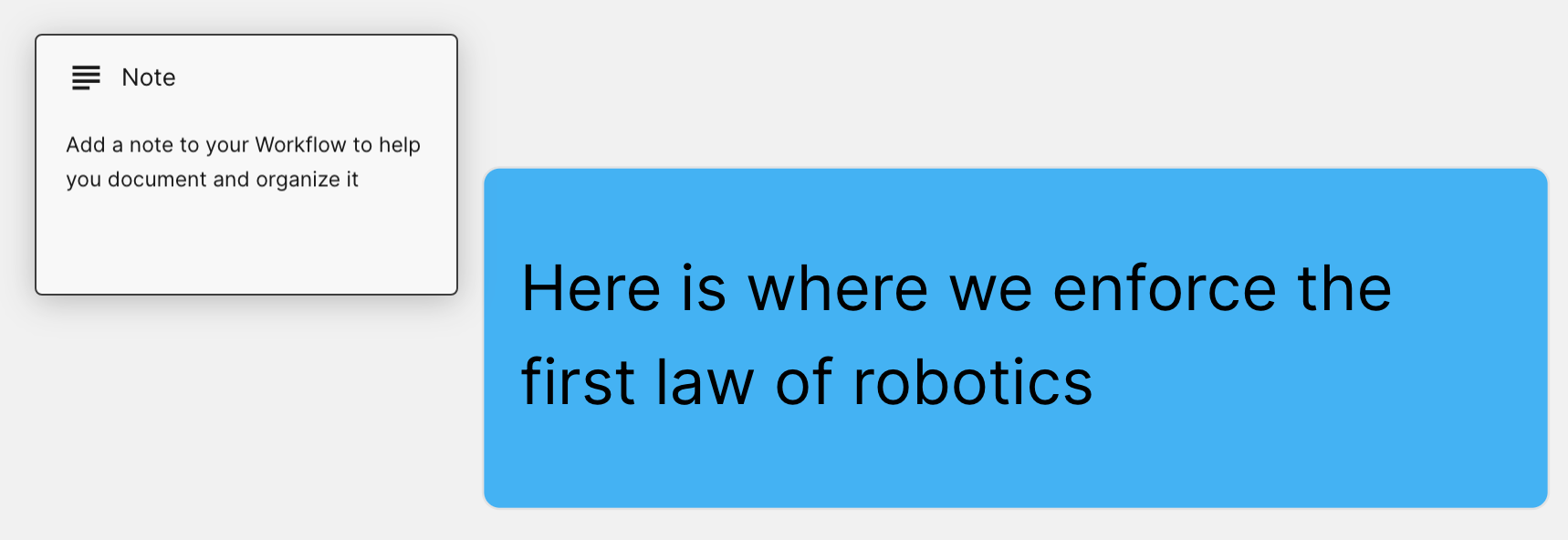

To help you organize and document your Workflows, we’ve added Workflow Notes with customizable colors and font sizes. You can find Workflow Notes in the Workflow Nodes drag-and-drop selector.

June 13th, 2024

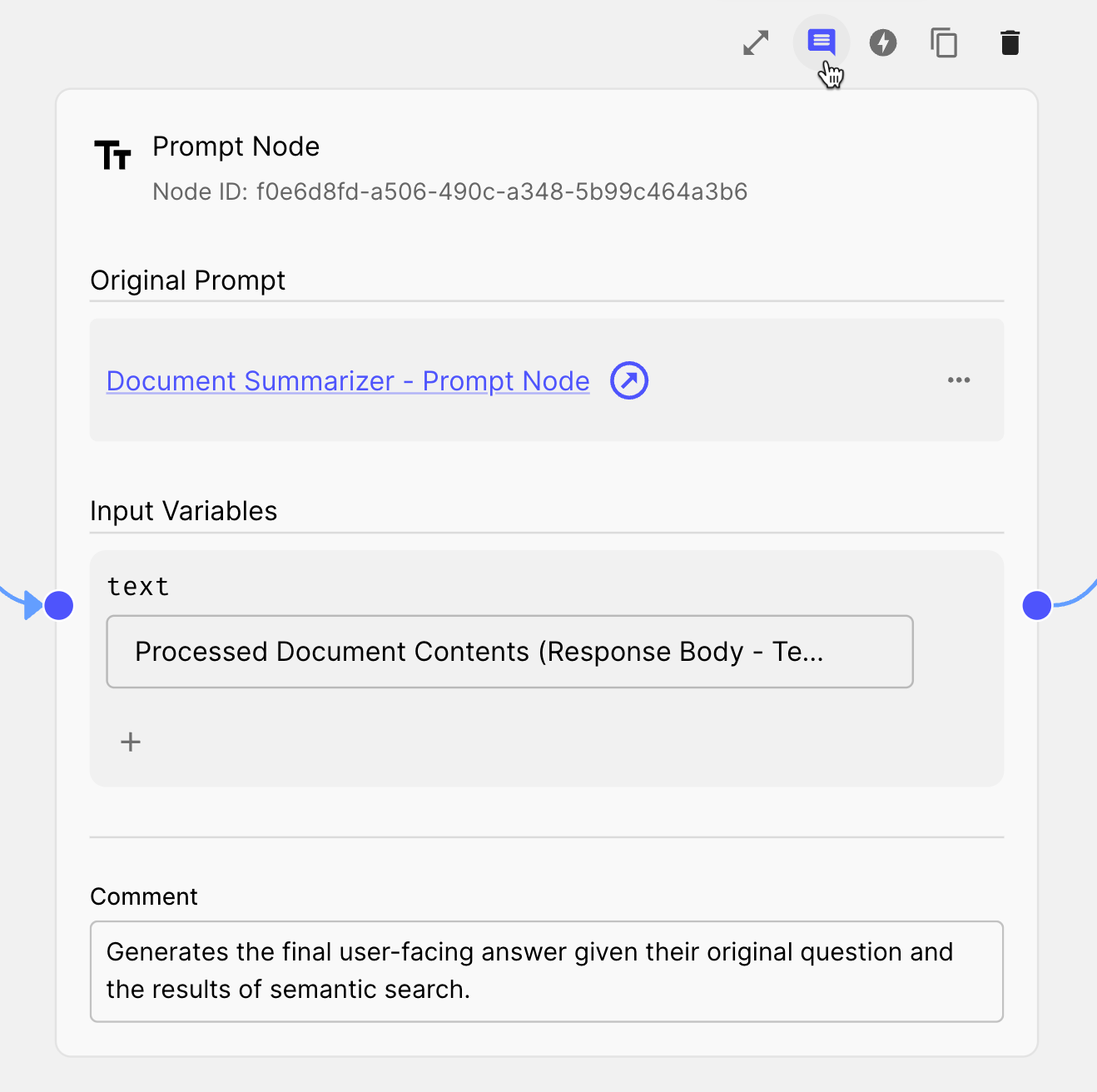

You can now add a comment to any Workflow Node to help you document your Workflow’s logic. To add a comment, click the chat bubble icon at the top right of the Node to open the comment section.

June 11th, 2024

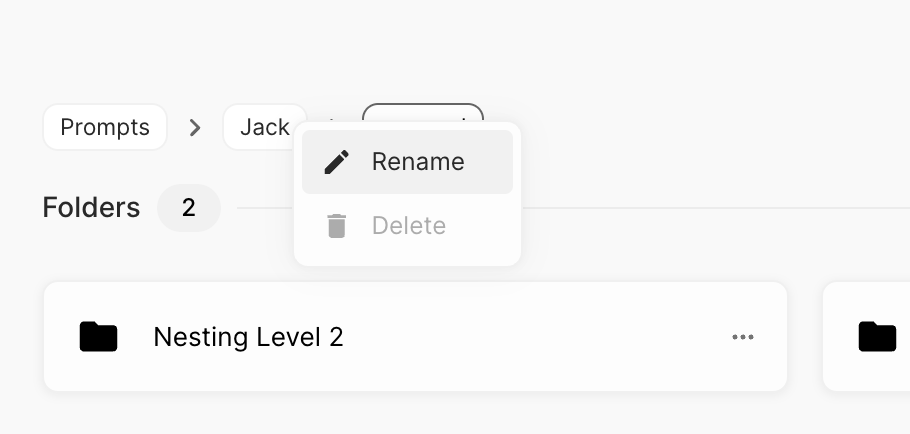

You’ll now see breadcrumbs that show the folder path whenever visiting the details of an entity in Vellum. This is helpful for seeing the file structure and easily navigating up to a parent folder.

With this, you can also rename a parent folder by right-clicking on its breadcrumb rather than having to first navigate to its parent.

Lastly, you can now also access all of an entity’s “More Menu” options by right-clicking its card in the entity’s grid view.

June 10th, 2024

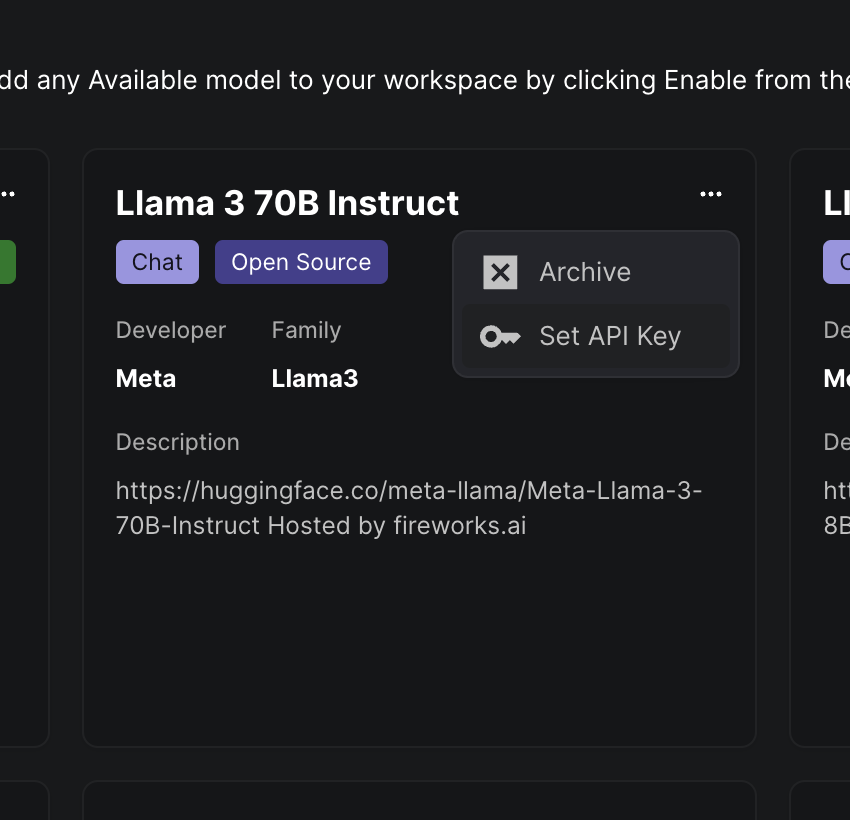

You can now provide your own API keys for models that Vellum otherwise supplies keys for, such as Fireworks-hosted models. To do so, click the three-dot menu on a Model card and select the “Set API Key” option.

June 7th, 2024

Made a mistake while editing a Workflow? Good news: you can now undo and redo from within Workflow Sandboxes by using keyboard shortcuts or by clicking the new undo and redo buttons.

June 7th, 2024

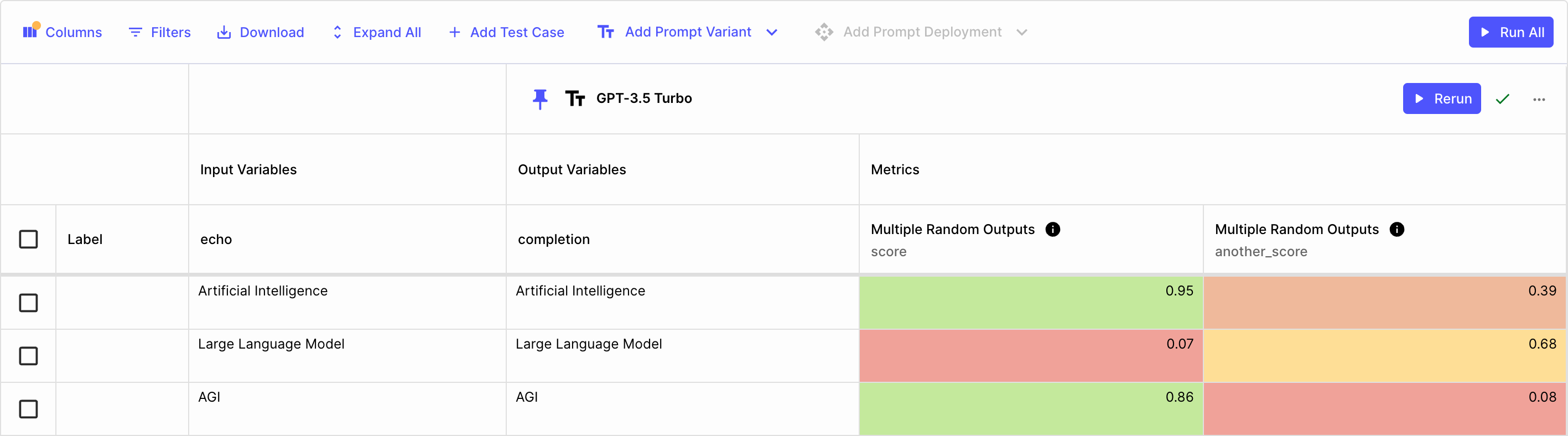

Using Vellum Workflows to power custom LLM Metrics (i.e. have one AI grade another AI) is super powerful, but to date, you’ve only been able to use Workflows that produce a single score output.

We now have official support for Workflow Metrics that produce multiple outputs! As long as the Workflow used as your Metric has at least one Final Output Node of type NUMBER named score, you can add as many additional Final Output Nodes with custom names and types as you like.

All outputs are shown when the Metric is used in an Evaluation Report.

Note: only the score output is aggregated and shown in the aggregate view.
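
To make the rule concrete, here is a small Python sketch of how multi-output Metric results could be checked and aggregated. It is illustrative only: `validate_metric_outputs`, `aggregate_scores`, and the sample rows are hypothetical, and using the mean as the aggregate is an assumption, since this entry does not say how scores are aggregated.

```python
from statistics import mean
from typing import Any, Dict, List


def validate_metric_outputs(outputs: Dict[str, Any]) -> None:
    """Require a numeric output named "score"; other outputs may be anything."""
    score = outputs.get("score")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        raise ValueError('Metric outputs need a NUMBER output named "score"')


def aggregate_scores(rows: List[Dict[str, Any]]) -> float:
    """Aggregate only the score output (mean is an assumption for illustration)."""
    for row in rows:
        validate_metric_outputs(row)
    return mean(row["score"] for row in rows)


# Hypothetical per-test-case outputs from a Workflow used as a Metric.
rows = [
    {"score": 0.5, "reasoning": "Partially correct", "is_safe": True},
    {"score": 1.0, "reasoning": "Matches the expected answer", "is_safe": True},
]
average_score = aggregate_scores(rows)
print(average_score)
```

The extra outputs (`reasoning` and `is_safe` here) travel alongside each row but play no part in the aggregate.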

June 6th, 2024

For a while now, you’ve been able to programmatically upsert and delete individual Test Cases in a Test Suite.

However, this can be problematic if you want to operate on many Test Cases at once. To solve this, we’ve added an API to create, replace, and delete Test Cases in bulk.

Check out the new Bulk Test Case Operations API in our docs here.

Note: this API is available in our SDKs beginning with version 0.6.4.

June 5th, 2024

To follow up on the release of APIs for programmatically deploying Prompts and Workflows, we’re excited to also announce APIs for programmatically moving Release Tags.

With these APIs, you can create a CI/CD pipeline that automatically moves a Release Tag for one environment from one version of a Prompt/Workflow to another.

For example, you might run certain tests or QA processes before promoting STAGING to PRODUCTION.
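
As a rough sketch, the gating step of such a pipeline might look like the Python below. Everything here is hypothetical: `promote_release_tag` is an illustrative helper, and `run_checks` and `move_release_tag` are placeholders you would implement yourself, the latter by calling the Release Tag API described in the docs. No real SDK method names or request shapes are assumed.

```python
from typing import Callable, List, Tuple


def promote_release_tag(
    run_checks: Callable[[], bool],
    move_release_tag: Callable[[str, str], None],
    tag_name: str,
    target_version: str,
) -> bool:
    """Move tag_name to target_version only if the checks pass.

    Both callables are placeholders: run_checks is your test or QA step, and
    move_release_tag should wrap the actual Release Tag API call.
    """
    if not run_checks():
        return False  # leave the tag where it is
    move_release_tag(tag_name, target_version)
    return True


# Stand-ins so the sketch runs without network access.
moved: List[Tuple[str, str]] = []


def fake_move(tag: str, version: str) -> None:
    """Record the move instead of calling a real API."""
    moved.append((tag, version))


promoted = promote_release_tag(
    run_checks=lambda: True,
    move_release_tag=fake_move,
    tag_name="PRODUCTION",
    target_version="version-under-test",
)
print(promoted, moved)
```

Passing the API call in as a function keeps the gate easy to test in CI before wiring it to a live deployment.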

To move a Prompt Deployment Release Tag, check out the API docs here.

To move a Workflow Deployment Release Tag, see the API docs here.

Note: these APIs are available in our SDKs beginning with version 0.6.3.

June 5th, 2024

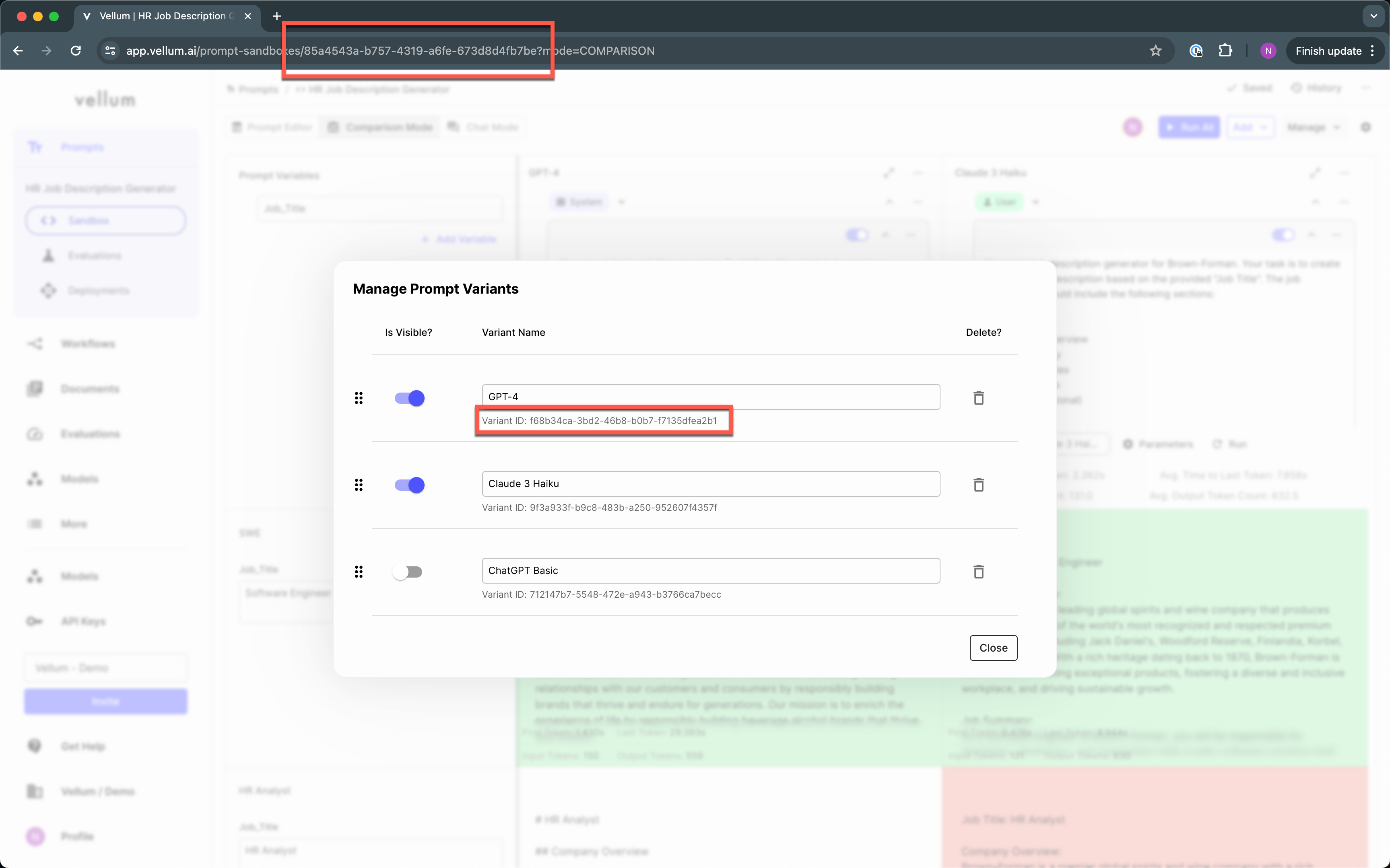

At the request of a few very forward-thinking customers, we now have APIs to support programmatically deploying Prompts and Workflows. These APIs can be used as the basis for CI/CD pipelines for Vellum-managed entities.

We’re super bullish on integrating Vellum with existing release management systems (think GitHub Actions), and you can expect to see more from us here soon!

To deploy a Prompt, you’ll need the IDs of the Prompt Sandbox and the Prompt Variant shown here:

You can then call the Deploy Prompt endpoint found here.

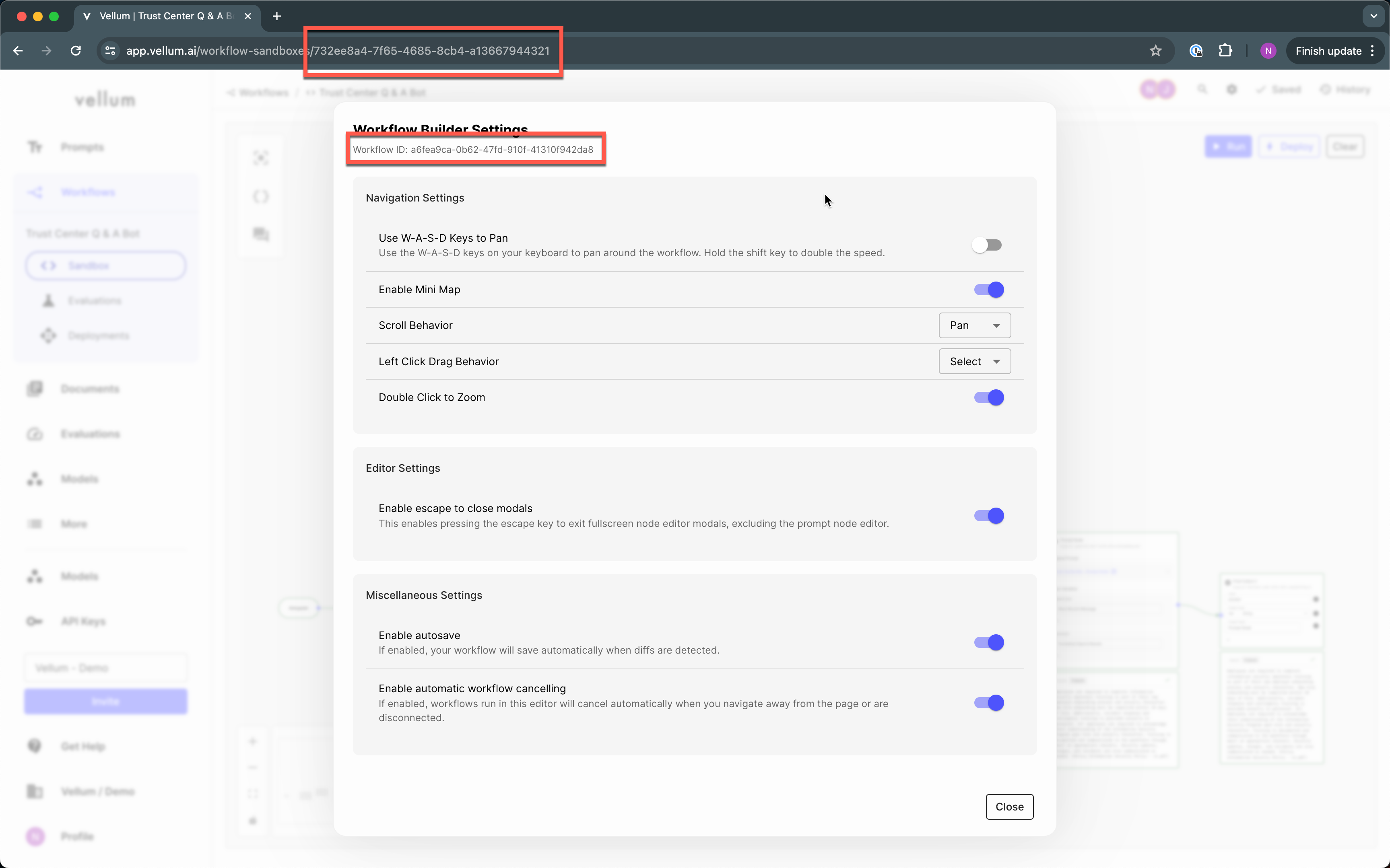

Similarly, to deploy a Workflow, you’ll need the IDs of the Workflow Sandbox and the Workflow shown here:

You can then call the Deploy Workflow endpoint found here.

Note: these APIs are available in our SDKs beginning with version 0.6.3.

June 3rd, 2024

Until now, GPT-4 was the only multi-modal family of models supported in Vellum that let you parse images and return text.

Vellum now also supports multi-modality for Claude 3 and Gemini models. This means you can now use Vellum’s prompt comparison UI and normalized API layer to compare and easily swap between multi-modal models.

This is particularly useful if you’re trying to extract text from images, classify pictures, and more, and need to find the best model for your specific use-case.

For more on how to work with images in Vellum, see our help docs here.